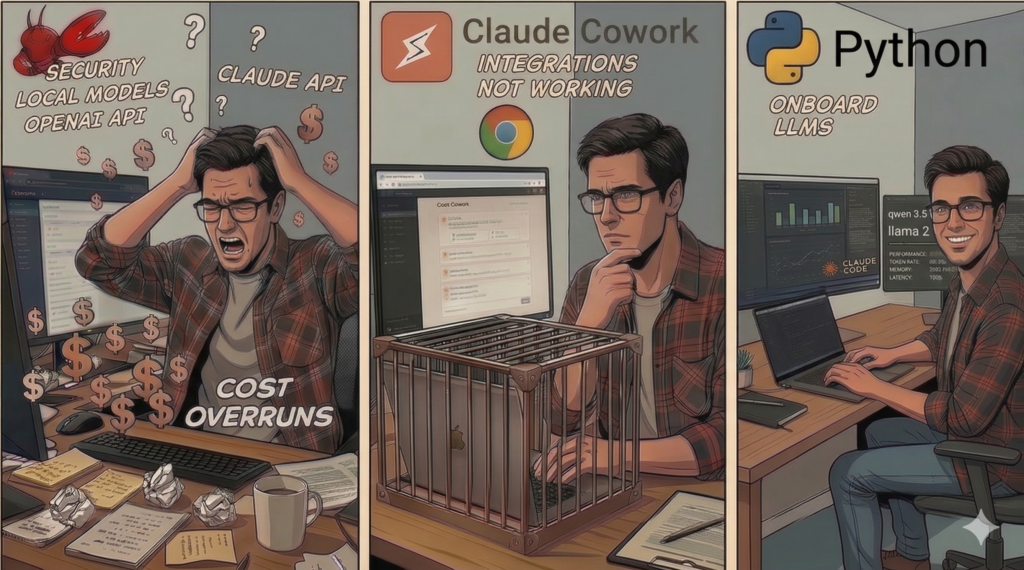

My journey using OpenClaw, Claude Cowork, and eventually Python to complete what I thought was a simple task...

My Goal: Build a smart content aggregator that doesn’t suck:

- Scan my inbox → find newsletter subs → extract the actual signal from the noise

- Hit my RSS feeds → grab relevant articles → summarize what matters

- Merge everything → kill duplicates and spam → output one clean daily page worth reading

- Run it for pennies → because I’m cheap (see: miser)
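
The “merge everything, kill duplicates” step can be sketched in a few lines. This is only an illustration under stated assumptions: items are plain dicts with hypothetical `title` and `link` keys, and two items count as duplicates when their links match after stripping the query string and fragment.

```python
import hashlib
from urllib.parse import urlsplit, urlunsplit


def normalize_url(url: str) -> str:
    """Lowercase scheme/host, drop a trailing slash, query string, and fragment."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/")
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, "", ""))


def item_key(item: dict) -> str:
    """Hash the normalized link, falling back to the title when there is no link."""
    if item.get("link"):
        basis = normalize_url(item["link"])
    else:
        basis = item["title"].strip().lower()
    return hashlib.sha256(basis.encode("utf-8")).hexdigest()


def merge_unique(*sources: list[dict]) -> list[dict]:
    """Concatenate sources in order, keeping only the first copy of each item."""
    seen: set[str] = set()
    merged = []
    for source in sources:
        for item in source:
            key = item_key(item)
            if key not in seen:
                seen.add(key)
                merged.append(item)
    return merged
```

Dropping the whole query string removes tracking parameters like `utm_source`, but it would also merge articles on sites that put the article ID in the query, so treat that rule as a starting point.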

OpenClaw - The Contender

I figured I’d see what the hype was about.

Everyone was losing their minds over OpenClaw: supposedly powerful, dead simple, the whole package. Based on what I’d seen, this should’ve been a cakewalk (security mess aside, but whatever, I knew what I was signing up for).

The Setup

Dusting off the old warhorse.

I had an Asus ROG Strix Scar II with an RTX 2070M collecting dust, a leftover from my OCR model training days (Keras/Python) and, admittedly, plenty of Rainbow Six Siege and Halo Infinite. It was fast once upon a time, and it was just sitting there. Perfect guinea pig.

Lockdown first, questions later.

Security paranoia kicked in immediately:

- New local account, completely isolated

- Shadow email for newsletter subs

- OpenClaw installed with every security setting cranked to max—tested thoroughly, locked down tight

- Discord integration on a private channel (worked, but created separate threads—annoying, not what I wanted)

- OpenAI API key with $10/month cap

- Google API + “gog” configured for shadow email access

- Fed OpenClaw my instructions, let it ask clarifying questions to dial it in

First flight: expensive.

After wrestling “gog” into submission, I ran it on a small sample of emails and RSS feeds.

I swear I heard a cash register dinging in the distance.

First run? Almost $3. For a subset of the feeds. At that rate, I’m looking at $90+/month. Absolutely not happening. Time to get creative.
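
The back-of-the-envelope math, as a tiny sketch. It assumes one run per day and a 30-day month (both assumptions), and the real figure would be higher since that first run covered only a subset of the feeds.

```python
def monthly_cost(cost_per_run: float, runs_per_day: int = 1, days: int = 30) -> float:
    """Project a per-run cost out to a month."""
    return cost_per_run * runs_per_day * days


def max_cost_per_run(monthly_cap: float, runs_per_day: int = 1, days: int = 30) -> float:
    """Work backwards: what can one run cost and still fit under the cap?"""
    return monthly_cap / (runs_per_day * days)


print(f"${monthly_cost(3.00):.2f}/month at $3 per run")
# prints "$90.00/month at $3 per run"
print(f"${max_cost_per_run(10.00):.2f} per run to stay under a $10 cap")
# prints "$0.33 per run to stay under a $10 cap"
```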

Switching To Anthropic

Attempt #2: Anthropic to the rescue?

Switched to my Anthropic API key—Sonnet for thinking, Haiku for summaries. Testing revealed some wins and some… problems.

The good:

- Faster execution, better output quality

The bad:

- Still not cheap. Cost barely budged.

- API throttling jail. My tier had strict rate limits. I wasn’t careful. Account locked for what I thought was 24 hours (actually 2, but I didn’t figure out the fix for a full day—so, effectively worse).

- Haiku wasn’t actually running summaries. Traces showed OpenClaw ignoring my instructions, still burning expensive tokens on summarization.

- No consistent control. Tried multiple approaches to force the big model only where needed. Traces said otherwise. The bill confirmed it.

- Cron timing was a mess. 8AM runs were wildly inconsistent. Initially blamed the laptop sleeping, but even when awake I’d have to trigger jobs manually half the time.

Bottom line: Still hitting ~$1.30/day. Frontier models were out.
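
On the throttling point: the usual defense is to back off and retry instead of hammering the API. Here is a minimal, generic sketch. `RateLimited` is a hypothetical stand-in for whatever your client raises on a 429, and nothing here is specific to OpenClaw or to Anthropic’s SDK.

```python
import random
import time


class RateLimited(Exception):
    """Hypothetical stand-in for the error your API client raises on HTTP 429."""


def call_with_backoff(call, max_attempts=5, base_delay=1.0, max_delay=60.0, sleep=time.sleep):
    """Retry `call` on RateLimited, doubling the wait each time plus a little jitter."""
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the error instead of retrying forever
            delay = min(max_delay, base_delay * 2 ** attempt)
            # Up to 10% jitter so parallel workers do not all retry at the same moment.
            sleep(delay + random.uniform(0, delay * 0.1))
```

Wrap each model call as `call_with_backoff(lambda: client_call(prompt))`, where `client_call` is whatever function actually hits the API.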

Ollama to the Rescue?

Attempt #3: Going local with Ollama

I’d been running Ollama on my Mac for a while—maybe local models could save me. Tried a split setup:

- Asus laptop: Installed Ollama + a few models at 8B and under. llama3.1:8b handled summaries decently, though slowly. Nvidia analyzer pointed to data movement, not compute.

- Mac M4 (36GB unified memory): Loaded qwen3.5:35b-a3b and qwen3.5:27b. Manual tests through Ollama’s UI looked promising—seemed pretty snappy.

- OpenClaw config: Two Ollama hosts (local PC, remote Mac). Tweaked prompts to route tasks correctly—took some trial and error.

Reality check: The Mac choked… or did it?

Remote Ollama was painfully slow under OpenClaw—constant timeouts. I gutted the context files (SOUL.md, MEMORY.md, IDENTITY.md) down to ~1/3 their size to reduce overhead. Barely helped.
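
One way to tell whether the model or the plumbing is slow is to call each Ollama host directly with an explicit timeout. The sketch below uses Ollama’s `/api/generate` endpoint on its default port 11434. The host addresses and the task names in `HOSTS` are made up for illustration.

```python
import json
import urllib.request

# Hypothetical addresses: swap in your own machines. 11434 is Ollama's default port.
HOSTS = {
    "summarize": ("http://localhost:11434", "llama3.1:8b"),
    "plan": ("http://mac.local:11434", "qwen3.5:35b-a3b"),
}


def build_request(task: str, prompt: str) -> urllib.request.Request:
    """Build a non-streaming POST to /api/generate on the host assigned to `task`."""
    base_url, model = HOSTS[task]
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode("utf-8")
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(task: str, prompt: str, timeout: float = 120.0,
             urlopen=urllib.request.urlopen) -> str:
    """Send the prompt and return the model's text; raises on timeout or HTTP error."""
    with urlopen(build_request(task, prompt), timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

Timing `generate("plan", ...)` against the same prompt sent through the agent gives a rough baseline. For the remote host to answer at all, its Ollama has to listen on a non-loopback address, which as far as I know is controlled by the `OLLAMA_HOST` environment variable.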

Plan B: Smaller model.

Dropped down to deepseek-r1:8b with its different chain-of-thought approach. Required custom OpenClaw instructions to integrate properly. It could finish planning and connections… sometimes. Inconsistent results. Still timed out or gave generic garbage responses.

The verdict:

Only the $$$ frontier models worked reliably. Which defeated the whole point. Back to the drawing board.

Claude Cowork - The Savior??

Attempt #4: Claude Cowork—too good to be true?

I’d been sitting on a Claude Max Plan for months doing open source work—barely cracking 60% usage most weeks. Then Cowork dropped.

Light bulb moment: use my existing subscription to do the heavy lifting. Process the feeds, build the report, drop it in my local git repo, publish to my site. Done.

Seemed almost too easy…

The Setup

Setup: suspiciously smooth.

Spun up a new Cowork task. Described the goal—where to pull content, where to dump the HTML, how to commit and push.

Cowork’s response? “Yep, looks simple.”

Started churning. Asked a few clarifying questions. Seven minutes later: complete SKILL.md with step-by-step instructions.

The Limitations

Reality: death by a thousand constraints.

Hit limitations immediately—and more after every “fix.”

Problem 1: Email connector cross-wired. Needed to connect to my shadow email, but it locked onto my primary account instead. Not fatal. Swapped it over, tried again.

Problem 2: Docker lockdown hell. Cowork runs in a brutally sandboxed container. Turns out it can’t do most of what I needed:

- No direct RSS access. WebFetch blocked on external URLs. Claude suggested workarounds, then admitted none would work.

- Chrome extension fallback. Open each feed in a browser tab (via Claude for Chrome), scrape from there.

- Slow and flaky. Even opening 5 tabs at once was painfully slow and unreliable. Tried delays, waiting for full page loads, everything—didn’t matter. Regular runs would miss feeds and just list them as “skipped” at the bottom.

- No git operations. Couldn’t commit or push. Suggested solution? “Here are the commands—copy/paste them yourself.” Completely defeats the point.

- Zero learning. Hit the same issues every run. No memory, no adaptation. I had to re-educate it every single time.

- Token drain. Process took ~15 minutes and burned 40-50% of my session limit. Left my mornings crippled—couldn’t do real work without hitting the 5-hour cap.

Attempt #5: Local web server.

Fed up with Chrome errors, I built a Flask server with APIs to:

- Pull all feed data fast

- Handle git commit/push via endpoint

Tested it. Worked perfectly. “Brilliant!” I thought.
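
For a sense of scale, a local helper like this is small. The sketch below is not the original Flask app: it swaps in the standard library’s `http.server` so it runs with no dependencies. The `/feeds` and `/publish` routes, `REPO_DIR`, the port, and `load_feed_items` are all hypothetical names.

```python
import json
import subprocess
from http.server import BaseHTTPRequestHandler, ThreadingHTTPServer

REPO_DIR = "."  # hypothetical: path to the local git repo holding the report


def load_feed_items() -> list[dict]:
    """Placeholder: return whatever the feed scraper has already collected."""
    return []


def commit_and_push(message: str) -> dict:
    """Stage, commit, and push; report the first git step that exits non-zero."""
    for cmd in (["git", "add", "-A"], ["git", "commit", "-m", message], ["git", "push"]):
        result = subprocess.run(cmd, cwd=REPO_DIR, capture_output=True, text=True)
        if result.returncode != 0:
            return {"ok": False, "step": " ".join(cmd[:2]), "error": result.stderr.strip()}
    return {"ok": True}


class Handler(BaseHTTPRequestHandler):
    def _send_json(self, status: int, payload) -> None:
        body = json.dumps(payload).encode("utf-8")
        self.send_response(status)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def do_GET(self):
        if self.path == "/feeds":
            self._send_json(200, load_feed_items())
        else:
            self._send_json(404, {"error": "not found"})

    def do_POST(self):
        if self.path == "/publish":
            length = int(self.headers.get("Content-Length", 0))
            data = json.loads(self.rfile.read(length) or b"{}")
            result = commit_and_push(data.get("message", "Daily report"))
            self._send_json(200 if result["ok"] else 500, result)
        else:
            self._send_json(404, {"error": "not found"})


def make_server(port: int = 8765) -> ThreadingHTTPServer:
    # Bind to loopback only so nothing else on the network can trigger a push.
    return ThreadingHTTPServer(("127.0.0.1", port), Handler)

# To run it: make_server().serve_forever()
```

Note that `git commit` exits non-zero when there is nothing to commit, so an unchanged report shows up as a failed `git commit` step in this sketch.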

Hubris, meet reality.

Told Cowork to use the local service. It congratulated me on my “novel approach” (was it taunting me?), rewrote the script, ran it… and immediately crashed. Can’t use WebFetch on localhost. No bypass. No exceptions. Are you kidding me?

Plan C: Chrome for everything.

Told it to hit my local server through Chrome tabs. This finally worked, but now I’m running a server just for a 10-minute job, watching it spam my browser with tabs, and it still doesn’t run on schedule half the time. Had to babysit it every morning.

Frustrating doesn’t cover it. Time to pivot. Again.

Claude Code + Python - Tried and True

Attempt #6: Screw it, I’ll just code it myself.

Fed up with Cowork’s limitations, I went back to basics: have Claude Code generate a proper script.

After some back-and-forth and local model testing, here’s what I built:

- No LangChain. This was simple enough—straight Python.

- RSS scraper: Processes 5 feeds at a time. Minutes faster than Cowork ever was.

- Ollama on my Mac: Loaded a few models to test the workflow.

- Orchestration: qwen3.5:35b-a3b. Handles the logic, combines everything, generates the final HTML from a template. Same model OpenClaw choked on, but ran way better directly from Python. Pretty sure OpenClaw’s plumbing was the problem, not the model.

- Summarization shootout:

  - llama3.1:8b: Solid summaries, blazing fast (<2 sec each).

  - gemma4:e4b: Better quality, but slower. Stuck with llama.

- Content filtering: Added classification rules to auto-kill ads and non-technical fluff. Model evaluates each piece against the rules—keeps the feed clean.

- Parallel processing: ~140 summaries per day. Running 2 sub-8B models in parallel—fits easily in my Mac’s RAM.
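
The “5 feeds at a time” scraper might look roughly like this. It is a standard-library sketch, not the actual script: it caps concurrency at five workers (a sliding window, not strict batches of five), handles only RSS 2.0 `<item>` elements, and the dict keys are my own naming.

```python
import urllib.request
import xml.etree.ElementTree as ET
from concurrent.futures import ThreadPoolExecutor


def fetch(url: str, timeout: float = 15.0) -> bytes:
    """Download one feed; the timeout keeps a dead server from stalling the run."""
    with urllib.request.urlopen(url, timeout=timeout) as resp:
        return resp.read()


def parse_rss(xml_bytes: bytes) -> list[dict]:
    """Pull title/link/description out of RSS 2.0 <item> elements (Atom is not handled)."""
    root = ET.fromstring(xml_bytes)
    return [
        {
            "title": (item.findtext("title") or "").strip(),
            "link": (item.findtext("link") or "").strip(),
            "summary": (item.findtext("description") or "").strip(),
        }
        for item in root.iter("item")
    ]


def scrape(feed_urls: list[str], workers: int = 5, fetcher=fetch):
    """Fetch up to `workers` feeds at once; return (items, skipped) so failures are visible."""
    def one(url):
        try:
            return url, parse_rss(fetcher(url)), None
        except Exception as exc:  # one bad feed should not sink the whole run
            return url, [], exc

    items, skipped = [], []
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map yields results in the same order as feed_urls.
        for url, parsed, error in pool.map(one, feed_urls):
            if error is None:
                items.extend(parsed)
            else:
                skipped.append((url, repr(error)))
    return items, skipped
```

Threads are enough here because the work is waiting on the network, not CPU. Python’s own documentation warns that `xml.etree` is not hardened against maliciously crafted XML, so only point this at feeds you trust.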

The result:

Fast. Clean summaries. Well-formatted report. All local. All open-weight models. Fan spins up, sure, but it works. Might need some error handling over time, but I’m happy.

Finally.

Conclusion: Lessons Learned, $$$ Saved

Interesting experiment. Got to kick the tires on some hyped tech.

OpenClaw: Couldn’t get consistent runs, botched the Ollama integration, scheduling was flaky. Maybe more tuning would’ve helped—or waiting for recent updates that supposedly fix Ollama integration. But Discord/Telegram job triggers are overkill for my needs, and the security model made me deeply uncomfortable. Pass.

Claude Cowork: Understood the task immediately, set up the process perfectly… then hit a wall. The guardrails are welded on. No “I know what I’m doing” override. Even with workarounds, the Docker sandbox killed it. Unusable.

Claude Code + Python: For a process like this? Code still wins. Is it bulletproof? Can I trigger it via Discord? Do I care? No, no, and no. It works. That’s enough.

The verdict:

The burden of proof is on OpenClaw and Cowork to justify their existence. Sure, they probably work fine if you’re willing to burn through frontier model credits. I’ll keep experimenting as they improve. Maybe someday I can migrate this back into one of these tools without hating my life—or my bank account.

Until then? Local models, Python scripts, and my wallet stays intact.

Stay curious. Keep shipping – KF